Have you ever uploaded a photo to Facebook and found your friends already tagged in it?

That’s possible thanks to a technique called machine learning, which lets computers learn in a way loosely similar to how humans do: through practice.

Image-recognition programs like this one look for patterns in pictures. They take a guess at what the image shows and check it against the label a human has given it. If the guess is wrong, they slightly adjust the patterns they’re looking for so they get a better result next time.

Over thousands of rounds of trial and error, the program slowly gets better at guessing what it’s looking at.

For example, if you upload a picture of you and a mate, the program will guess who’s in it based on older pictures your friends have been tagged in. If it guesses wrong, you can correct it, and those corrections are what help the program do better next time.
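To make the guess, check and adjust loop concrete, here is a toy sketch in Python. It is far simpler than anything a real photo-tagging system uses. Each “picture” is just two made-up numbers (say, how pointy the ears are and how visible the whiskers are). The labels play the part of a human’s tags, and the names `examples`, `guess` and `learning_rate` are our own invention. The learning rule is a classic one called the perceptron.

```python
# Toy "pictures": each is just two made-up feature scores, e.g. (pointy_ears, whiskers).
# Labels are what a human says the picture actually is: 1 = cat, 0 = not a cat.
examples = [
    ((0.9, 0.8), 1),
    ((0.8, 0.9), 1),
    ((0.2, 0.1), 0),
    ((0.1, 0.3), 0),
]

weights = [0.0, 0.0]  # how much the program cares about each pattern
bias = 0.0
learning_rate = 0.1   # how much to nudge the weights after a wrong guess


def guess(features):
    """Return 1 (cat) if the weighted evidence is positive, else 0 (not a cat)."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0


for _ in range(1000):  # many rounds of trial and error
    mistakes = 0
    for features, label in examples:
        error = label - guess(features)  # 0 if right, +1 or -1 if wrong
        if error != 0:
            mistakes += 1
            # Nudge each weight slightly toward the answer the human gave.
            for i, x in enumerate(features):
                weights[i] += learning_rate * error * x
            bias += learning_rate * error
    if mistakes == 0:  # a whole round with no wrong guesses, so stop
        break

print([guess(f) for f, _ in examples])
```

Running it prints `[1, 1, 0, 0]`. After a few rounds of small corrections, the program labels every training picture the way the human did, even though nobody told it which pattern mattered most.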

The problem is, once a program starts changing itself, it becomes hard to understand how it works and even harder to fix any problems. That’s where Deep Dream comes in.

Looking under the hood

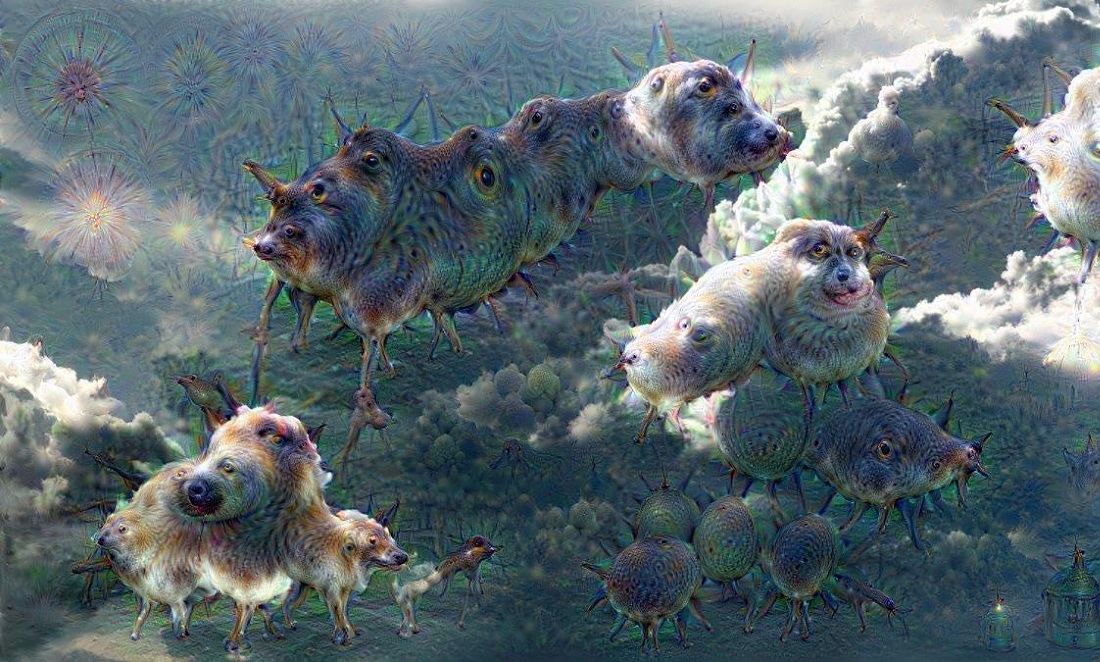

Google developed the Deep Dream software to give its engineers a look through their program’s eyes.

Deep Dream is like learning in reverse. It still takes an image, looks for patterns and makes corrections. However, instead of changing the program to match the image, it changes the image to match the program. Eventually, the picture starts to look like whatever patterns the program is trained to detect.
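Here is the same kind of toy turned around, as a rough Python sketch of “learning in reverse”. Google’s real Deep Dream works on a large trained neural network. This version instead assumes a single made-up 3×3 “face detector” that never changes. Each step nudges the pixels of a grey, noisy image in whichever direction makes the detector respond more strongly. The names `detector`, `image` and `response` are our own.

```python
# A made-up "trained" pattern detector for a tiny 3x3 greyscale picture.
# +1 marks where it expects bright pixels (two eyes and a mouth), -1 dark ones.
detector = [
    [1, -1, 1],
    [-1, -1, -1],
    [1, 1, 1],
]

# Start from a grey, slightly noisy picture (0.0 = black, 1.0 = white).
image = [
    [0.5, 0.4, 0.6],
    [0.5, 0.5, 0.4],
    [0.6, 0.5, 0.5],
]


def response(picture):
    """How strongly the detector 'sees' its pattern in the picture."""
    return sum(detector[r][c] * picture[r][c] for r in range(3) for c in range(3))


before = response(image)
step = 0.05  # how much to change each pixel per round
for _ in range(20):
    for r in range(3):
        for c in range(3):
            # The detector never changes. Instead, each pixel moves in the
            # direction that raises the response: brightening pixel (r, c) by
            # one unit changes the response by detector[r][c].
            new_value = image[r][c] + step * detector[r][c]
            image[r][c] = min(1.0, max(0.0, new_value))  # keep pixels in [0, 1]

for row in image:
    print("".join("#" if pixel > 0.5 else "." for pixel in row))
print("response before:", round(before, 2), "after:", response(image))
```

The picture that started out as grey noise ends up printing as `#.#`, `...`, `###`. In other words, the detector’s “two eyes and a mouth” pattern has been painted into the image, because that is the only thing the detector knows how to look for.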

Humans aren’t so different.

We also have a pretty strong tendency to see the kinds of patterns we’re used to.

One famous example is pareidolia: the tendency to see meaningful shapes, especially faces, in random patterns. If you’ve ever seen faces in buildings or cars, spotted animals in clouds or seen the man in the Moon, you’re doing much the same thing Deep Dream does. You’re seeing familiar patterns that aren’t really there.

Inside your head

Machine learning has been used to sort everything from LEGO bricks to galaxies. It’s a great way to process more data than a human could ever cope with.

Deep Dream was originally invented as a troubleshooting tool, but it has since spawned some interesting uses of its own.

By having the program ‘correct’ a photo until it picks up the patterns of a painting, machine learning can imitate a particular artist’s style. This technique is called ‘style transfer’. With it, we can see the world not just as a computer sees it, but as famous artists throughout history saw it.

Researchers at CSIRO and MIT, meanwhile, are getting the public to give feedback on computer-generated horror images in an attempt to learn what makes a picture scary.

So, while we’re busy teaching computers, we might just end up learning something about ourselves too.